ESnet’s Confab23 Takes a Deep Dive into the Integrated Research Infrastructure

By Bonnie Powell, ESnet Communications Lead

A selection of photos from Confab23. Credit: Deb Lindsey Photography and ESnet staff.

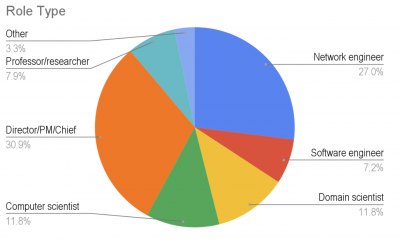

The Confab23 gathering in Gaithersburg, Maryland, was the place to be in mid-October for Department of Energy (DOE)–funded domain scientists, computer scientists, network engineers, DOE program managers, and user facility staff interested in co-designing the future of integrated science. Confab23 was the second such user-community gathering convened by the Energy Sciences Network (ESnet), the “data circulatory system” for DOE scientific research. It served both as a showcase for the latest ESnet advances in technologies and services and as a forum for other speakers to share their own innovative multi-facility approaches and projects.

More than 160 attendees and speakers, representing 38 participating national laboratories and other organizations, traveled from 22 states to engage in two-plus days of meaningful conversations, idea exchange, and collaboration.

This year’s theme was “Co-Designing the Future of National-Scale Integrated Science and Networking,” inspired by DOE’s Integrated Research Infrastructure (IRI) program and informed by the Office of Science’s (SC’s) recently released IRI Architecture Blueprint Activity final report. Researchers across DOE now routinely collaborate across multiple facilities, using instruments, high performance computing, artificial intelligence, and other tools, and sharing massive, complex datasets. IRI focuses on seamlessly integrating scientific facilities, data management, and computing to power scientific discovery.

“Confab really demonstrated the power of this interconnected vision of an integrated research infrastructure and the ways in which the research communities are embracing it — and who better than ESnet, which is already working with so many Confab attendees on early IRI prototype projects, to facilitate the conversation,” commented Amber Boehnlein, associate director for computational science and technology at the Thomas Jefferson National Accelerator Facility (Jefferson Lab).

“It was a privilege to engage with our broader science user community,” said Inder Monga, executive director of ESnet. “ESnet is not just a network; it is also a critical enabler for scientific discovery and innovation. The discussions at Confab have given us a better understanding of their evolving needs and will guide our efforts to provide world-class services and support in an IRI-driven future. We’re excited to bring everyone back together to discuss our collective progress.”

Setting the Stage

The first Confab, in 2022, followed the unveiling of ESnet6, the latest iteration of ESnet’s leading-edge network in support of scientific research, which was specifically designed to support multi-facility collaborations aligned with IRI. And on the very morning of Confab23’s first day, DOE announced the formation of its first new user facility in years, the High-Performance Data Facility. The new HPDF, to be led by Jefferson Lab in partnership with Lawrence Berkeley National Laboratory (Berkeley Lab, which manages ESnet), is expected to be a cornerstone of the IRI program.

ESnet Executive Director Inder Monga welcomes Confab23 attendees.

Confab23 began with an evening gala and remarks on “The Future of Networking, from ESnet6 to Quantum” by Monga, who is also principal investigator for the quantum communications networking project QUANT-NET. Monga led the audience through ESnet’s evolution from a simple information highway, in which “whether you were a truck or a car or motorbike, you took the same ramp to join and exit,” to one that must constantly innovate in order to accommodate both Ferrari drivers and “those of you with a goods train with four engines chugging cross-country every hour of every day.” Quantum networking, meanwhile, is in its infancy, he said — not unlike being “back in 1986 thinking streaming video will never happen.”

The next day and a half were devoted to Confab’s IRI theme: first through a broad DOE programmatic lens, then through detailed presentations by scientific researchers showing the kind of work that will most benefit from IRI, and finally through discussions of what initial implementations might look like.

Advanced Scientific Computing Research (ASCR) Facilities Director Ben Brown opened Confab23’s IRI sessions with a keynote on the Integrated Research Infrastructure (IRI) program.

DOE’s Advanced Scientific Computing Research (ASCR) Facilities Director Ben Brown, who has championed the IRI program, opened the conversation with a compelling overview of its development process, scope, and next steps. Infrastructure is more than hardware, he said: “It’s about empowering people, and it’s about data.” He pointed out the double meaning embedded in IRI’s name: “Integrated Research” Infrastructure (infrastructure supporting collaborative, integrated research) and Integrated “Research Infrastructure” (research infrastructure that’s been intentionally planned and linked). And while acknowledging the very real challenges of changing DOE culture, Brown stressed IRI’s benefits for researchers (dramatically reducing both time to insight and complexity) and for institutional leaders.

Left to right: Xiaofeng Guo, Nuclear Physics Computing program manager; Resham Kulkarni, Biological and Environmental Research’s Biological Systems Science Division program manager; Dava Keavney, Basic Energy Sciences X-ray and Neutron Scattering Facilities program manager; Michael Halfmoon, Fusion Energy Sciences Theory & Simulation program manager; and Eric Church, working on Computational HEP & AI/ML for High Energy Physics.

After his keynote, Brown moderated a panel discussion of IRI across DOE SC program offices, featuring Xiaofeng Guo, Nuclear Physics Computing program manager; Resham Kulkarni, Biological and Environmental Research’s Biological Systems Science Division program manager; Michael Halfmoon, Fusion Energy Sciences Theory & Simulation program manager; Dava Keavney, Basic Energy Sciences X-ray and Neutron Scattering Facilities program manager; and Eric Church, working on Computational HEP & AI/ML for High Energy Physics as a detailee from Pacific Northwest National Laboratory. They touched on the complexities posed by heterogeneous computing, workflows involving AI/ML, data management and interoperability, and more, offering specific projects as examples. A lively audience Q&A followed.

Other IRI-focused sessions included an introduction to the proposed IRI testbed by Arjun Shankar, interim division director for the National Center for Computational Sciences at Oak Ridge National Laboratory and coauthor of the new “Federated IRI Science Testbed (FIRST): A Concept Note.” Shankar invoked the 1960s-era Request for Comments (RFC) process led by Vint Cerf as “an energetic, fantastic way of codesigning a collaborative infrastructure that resulted in the Internet today.” IRI, too, would require a drive toward interoperability before anyone sat down to form a standards group, he said.

ESnet's Eli Dart gave an overview of IRI workflow patterns.

During lunch, Eli Dart, a network engineer in ESnet’s Science Engagement group, walked the audience through a new report he coauthored: a meta-analysis of workflow patterns across DOE Office of Science programs. Nicholas Schwarz, the lead for scientific software and data management at the Advanced Photon Source at Argonne National Laboratory, spoke about “Empowering Scientific Discovery at the Light Sources with Integrated Computing.” Frank Würthwein, director of the San Diego Supercomputer Center and a frequent ESnet collaborator, argued that “IRI-Oriented Data Services” should rest on the premise that “cyberinfrastructure needs to advance in support of both the really big and the massively diverse.” Würthwein then gave multiple examples of the vision, ideas, and existing and future R&D that would help make this a reality.

Rachana Ananthakrishnan, executive director of Globus, presented on how this research IT platform will support IRI.

And in an hour’s worth of lightning talks organized by ASCR Research Division Acting Director Hal Finkel and Physical Scientist Margaret Lentz, 14 scientific researchers delivered rapid-fire summaries of their work embodying the kind of big-data, multi-facility collaborations that IRI is intended to streamline and accelerate. Rachana Ananthakrishnan, executive director of the University of Chicago’s Globus project, gamely closed out the day with a deep dive into how this research IT platform, which supports data sharing by national and international research institutions, might enable and inform IRI implementations.

Exploring the IRI Testbed

Day two of Confab began with a keynote by Amedeo Perazzo, director of SLAC National Accelerator Laboratory’s Controls & Data Systems Division. Perazzo presented on his project ExaFEL, an Exascale Computing Project application and an early IRI prototype that performed real-time data processing for free-electron laser experiments at SLAC using the National Energy Research Scientific Computing Center (NERSC) and ESnet’s SENSE (Software-Defined Network for End-to-end Networked Science at the Exascale), an ESnet orchestration and intelligence system that provides network services to domain science workflows.

Left to right: ESnet Chief Technology Officer Chin Guok moderated an IRI testbed discussion with Damian Hazen from ESnet, Amber Boehnlein from Jefferson Lab, Eric Lançon from Brookhaven National Laboratory, Debbie Bard from NERSC, Kjiersten Fagnan from the Joint Genome Institute, Thomas Uram from the Argonne Leadership Computing Facility, and Arjun Shankar from Oak Ridge National Laboratory.

The middle of the day focused on exploring the nascent IRI testbed, with presentations and a discussion moderated by ESnet Chief Technology Officer Chin Guok, a member of the IRI Architecture Blueprint Activity (ABA) leadership team and head of ESnet’s experimental testbed efforts. Seven speakers represented a diverse cross-section of facilities: Damian Hazen, a computer systems engineer in ESnet’s Planning and Architecture group; Jefferson Lab’s Boehnlein; Eric Lançon, chair of Brookhaven National Laboratory’s Scientific Data & Computing Center; Debbie Bard, who leads the Data Science Engagement Group at NERSC; Kjiersten Fagnan, chief informatics officer of the Joint Genome Institute; Thomas Uram, a computer scientist at the Argonne Leadership Computing Facility; and Oak Ridge’s Shankar. They shared examples of the kind of scientific collaborations the IRI testbed will support and the challenges it must solve.

“A lot of software is kind of garbage,” commented Boehnlein to general laughter, before moving on to the need for trusted software tools and increased cybersecurity. Fagnan talked about data management issues posed by the sheer scale of Biological and Environmental Research data, which spans 16 orders of magnitude, “from the molecule to the globe.”

Once again, the audience had many practical questions about implementing IRI for the speakers — and the speakers themselves directed questions at Brown. “Why can’t I log in everywhere with my Lab ID?” complained Fagnan when the issue of federated access was raised. “It’s been 20 years. It’s not a technology problem.”

The Role ESnet Will Play

Yusra AlSayyad, Princeton University Postdoctoral Research Associate, shared the Vera Rubin Observatory’s Cloud strategy.

In the afternoon, Sterling Smith, software development group manager for General Atomics’ Fusion Division, presented “The DIII-D Superfacility Workflow,” which he said represented all three IRI workflow patterns, but especially the Time-Sensitive Pattern. “Experimental fusion science needs rapid data analysis in near real-time — and a more informed decision results in better experiments,” he emphasized. Princeton University Postdoctoral Research Associate Yusra AlSayyad shared the Vera Rubin Observatory’s Cloud Strategy, which pairs a “data butler” data store and registry in Google Cloud with cheaper storage provided by SLAC. ESnet has worked with the project and its partners to connect the observatory in Chile to the data center in Palo Alto, California.

Xi Yang, Pilots & Prototypes network engineer, discussed ESnet’s network orchestration and automation efforts.

Confab’s final sessions were devoted to the innovative services ESnet has developed to accelerate scientific research globally. Advanced Technologies & Testbeds Acting Group Lead Eric Pouyoul described the testbed ESnet has been running since 2010, open to all and free to use, with an initial focus on high-performance networking, distributed scientific workflows, and software-defined networking. That experience and expertise will position ESnet well to host the IRI testbed.

Yatish Kumar, consulting architect with ESnet’s Planning & Innovation department, presented the ESnet SmartNIC, a field-programmable gate array (FPGA) hardware platform designed to handle “networking problems that don’t fit into the switch or router stereotype,” as he put it. ESnet and Jefferson Lab have collaborated on the ESnet JLab FPGA Accelerated Transport (EJ-FAT), a load balancer that ensures data from many detectors goes to the right nearline analysis node in a cluster.

Managing resources and processes across multiple facilities, providers, access methods, and APIs imposes an unreasonable amount of overhead and complexity on researchers developing science workflows, said ESnet Pilots & Prototypes Network Engineer Xi Yang, who showed how ESnet’s network orchestration and automation efforts tackle that problem: end-to-end automation and orchestration can let workflows engage the infrastructure as a whole. Measurement & Analysis Software Engineer Andy Lake presented on “ESnet Metrics and Measurement for the Integrated Future,” focusing on ESnet’s Stardust platform and explaining that “a highly integrated infrastructure will have important questions to answer and stories to tell, all of which require a high degree of visibility in the form of integrated metrics.”

ESnet Measurement & Analysis Group Lead Ed Balas discussed what “IRI-Driven CX [Customer Experience]” will need to look like to ensure adoption, drawing on ESnet’s experience helping users make the best use of its services without having to become networking experts. Balas jokingly proposed that what was needed was a “DOEccano Science Construction Set”: a standard set of reusable components, joined in a limited number of well-understood ways, that would help creators focus on the bigger picture. “Science pipelines are like sausage making,” Balas said in closing. “Find a recipe you like and know works. Adapt it to your needs in a testbed. Do it by hand first, then start to scale up, and don’t forget the original goal.”

On the post-Confab survey conducted by ESnet, attendees rated overall meeting content quality and organization very highly, with an average score over 4.5 on a scale of 1 (poor) to 5 (excellent). “The quality of the presentations was fantastic,” complimented one respondent. More than 96% of respondents said they would recommend attending Confab to a colleague.

“Confab was an outstanding experience,” said Brown. “The program was really just spot-on, and most importantly, the energy and tempo were inspiring.”

ESnet is in the process of setting a date for the next Confab. To be notified by email when the next event is announced, please write to confab@es.net.