Improving Data Transfer Between Argonne National Laboratory and The University of Michigan

Introduction

Data-intensive science requires many components to work together effectively; a clean pipeline from data acquisition to data movement is required to ensure productivity. Researchers at the University of Michigan work closely with collaborators at Argonne National Laboratory’s Advanced Photon Source (APS) to migrate data collected from the scientific instrumentation to analysis and processing resources. While testing a new device’s capabilities, and expecting to transfer several large data sets back to the University for further study, a researcher remarked that things were not going as expected:

… we're working with the new fast detector today. The data transfer rate from here has been ~1.5 Mb/sec (about as fast as a DSL at home).

Expectations should have been much higher for the network between these facilities, which at the time was designed to support 10Gbps flows, so there was clearly a problem to locate and correct. This report prompted a full investigation aimed at identifying friction points in the infrastructure that could be addressed to improve scientific use of the network.

Identification of Problem Space

There are often many points of friction in a scientific workflow, so it is important to evaluate the entire environment one component at a time. Fixing one item may not lead to immediate gains, because data mobility problems may be layered. The areas to evaluate are:

- The construction and tuning of hosts that are involved in the process of data transmission

- The role of data transfer software, and the impact it may have on transferring information across a wide area network

- The performance of the underlying network components between facilities, including those managed by the local science groups, the campuses, regional networks, and backbone facilities

Tuning Data Movement Hosts

Hosts that are involved in data mobility must be tuned to ensure success:

- Appropriate tuning of the network interface card and operating system kernel to support high performance data transfers using TCP

- Disk and file system tuning to support data storage

The scientists performed both of these operations on their hardware, following the guidelines on the ESnet Fasterdata web site. There was no immediate improvement, which made it clear that additional problems existed elsewhere in the environment.
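As a rough illustration of why kernel buffer tuning matters, the bandwidth-delay product gives the amount of data that must be in flight to keep a path full. The sketch below uses the 10Gbps design figure from this report; the 10 ms round-trip time and the function name are assumptions made for illustration, not measurements from this investigation.

```python
def bdp_bytes(bandwidth_bps: float, rtt_seconds: float) -> int:
    """Bandwidth-delay product: bytes that must be in flight to fill the path."""
    return round(bandwidth_bps * rtt_seconds / 8)


# 10 Gbps is the design capacity mentioned in the text.
LINK_BPS = 10e9
# Assumed round-trip time; the real value between the sites is not given here.
ASSUMED_RTT_S = 0.010

needed = bdp_bytes(LINK_BPS, ASSUMED_RTT_S)
print(f"TCP buffer needed to fill the path: {needed / 2**20:.1f} MiB")
```

If the host's maximum TCP buffer is smaller than this value, a single flow cannot fill the path regardless of how healthy the network is.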

Selecting Better Applications

The next step is to evaluate the data movement task itself and the tools used to perform it. In many cases scientific collaborations rely on tools such as SCP (Secure Copy), a built-in function of the Secure Shell (SSH) suite of tools, or RSYNC, a utility that synchronizes the contents of directories. These tools are not designed for high performance operation, and have internal limitations such as small data buffers and overhead from compression and encryption that limit their ability to perform well on high speed networks.
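The effect of a small internal buffer can be sketched with simple arithmetic: a sender that keeps at most one window of data in flight cannot exceed one window per round trip. The 64 KiB window and 10 ms round-trip time below are hypothetical values chosen for illustration; they are not the actual buffer size of any particular SCP or RSYNC version, nor a measurement from this path.

```python
def window_limited_bps(window_bytes: int, rtt_seconds: float) -> float:
    """Upper bound on throughput when at most one window is in flight per RTT."""
    return window_bytes * 8 / rtt_seconds


# Hypothetical application-level window (assumption, not a measured value).
ASSUMED_WINDOW_BYTES = 64 * 1024
# Hypothetical round-trip time between the two sites (assumption).
ASSUMED_RTT_S = 0.010

ceiling = window_limited_bps(ASSUMED_WINDOW_BYTES, ASSUMED_RTT_S)
print(f"Throughput ceiling: {ceiling / 1e6:.1f} Mbps")
```

Under these assumptions the ceiling is far below the capacity of a 10Gbps path, and it falls further as the round-trip time grows.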

Globus Transfer, a service that utilizes the GridFTP tool, offers enhanced functionality for scientific data movement and includes the ability to graphically schedule and manage transfers of large data sets between more than 8000 locations around the world. After migrating to this tool, the science group still saw data mobility issues despite two attempts to remove friction.

Monitoring, Diagnosing, and Fixing Network Problems

The final investigation centered on the network that connected the two facilities, and whether it was to blame for the lower than expected performance. perfSONAR is a tool that monitors networks and helps to set performance expectations using a number of end-to-end testing approaches that exercise throughput, packet loss, and latency.

The staff at the University of Michigan installed several perfSONAR nodes and set up regular tests to a number of locations including Argonne National Laboratory, ESnet, Internet2, and their regional networking provider Merit. Once enough time had passed to establish a performance baseline, the OWAMP tool provided the results shown in Figure 1.

Figure 1: OWAMP Observed Packet Loss

The data in this graph show regular packet loss, sometimes reaching 3% or more during the busiest network usage times for the campus, and help to explain the low throughput. TCP-based applications, such as RSYNC or Globus Transfer, perform best on network paths free of packet loss. Loss reduces overall throughput because TCP's congestion control algorithm treats each lost packet as a sign of congestion and cuts its sending rate, so it cannot keep a steady stream of data flowing. It is crucial that packet loss be removed from networks if there is any hope of supporting data mobility. The packet loss was related to increased demand for network services, and helped to motivate an increase in capacity.
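To give a sense of scale, a commonly cited approximation from Mathis et al. bounds the throughput of a single TCP flow by (MSS / RTT) * (C / sqrt(p)), where p is the packet loss rate and C is roughly sqrt(3/2). The sketch below applies it to the 3% loss reported above; the 1460 byte segment size and 10 ms round-trip time are assumptions, and the result is an order-of-magnitude estimate rather than a prediction for this path.

```python
import math


def mathis_ceiling_bps(mss_bytes: int, rtt_seconds: float, loss_rate: float) -> float:
    """Approximate single-flow TCP throughput ceiling (Mathis et al. model)."""
    if not 0 < loss_rate <= 1:
        raise ValueError("loss_rate must be in the interval (0, 1]")
    c = math.sqrt(3 / 2)  # constant for the basic model without delayed ACKs
    return (mss_bytes * 8 / rtt_seconds) * (c / math.sqrt(loss_rate))


ASSUMED_MSS_BYTES = 1460   # typical Ethernet segment size (assumption)
ASSUMED_RTT_S = 0.010      # hypothetical round-trip time (assumption)
OBSERVED_LOSS = 0.03       # the 3% peak loss described in the text

ceiling = mathis_ceiling_bps(ASSUMED_MSS_BYTES, ASSUMED_RTT_S, OBSERVED_LOSS)
print(f"Estimated ceiling at 3% loss: {ceiling / 1e6:.1f} Mbps")
```

Because the ceiling scales with the inverse square root of the loss rate, even small amounts of loss keep a single flow far below the capacity of a multi-gigabit path.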

The University of Michigan worked to instantiate a new network connection at 100Gbps to improve network performance, and installed this new architecture after the investigation started. Figure 2 shows how the OWAMP tool captured the routing change (adding latency, but reducing packet loss due to the increase in capacity).

Figure 2: OWAMP Observed Routing Change

Subsequently, the BWCTL throughput tool captured the return of performance after the new routing was put in place. Figure 3 shows this recovery, and how the previous packet loss had kept one direction of the transfer extremely low.

Figure 3: BWCTL Observed Throughput Increase

Result

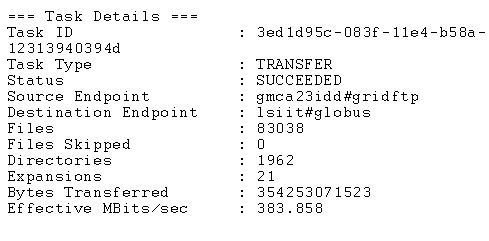

After verification of the network path, the scientists were able to re-evaluate their data transfer needs using Globus Transfer on the tuned hosts. Figure 4 shows these results.

Figure 4: Globus Transfer from UMich to ANL

This result was more than 250 times greater than what was observed at the start of the project, and represents a significant increase in productivity for the science groups and the networking teams that will continue to support them.